OpenAI has released gpt-oss, a new family of language models that can run on local hardware and are completely free. The models give developers an open-source option, and this article covers the basic questions: who can use them, when, and on which devices.

The most striking feature of the models is that, for the first time, such an advanced AI system has become this easily accessible. gpt-oss-20b can run on a laptop with 16 GB of memory. gpt-oss-120b, aimed at more powerful hardware, can run on a single GPU with 80 GB of memory. Both models are released as open source under the Apache 2.0 license, so software developers, institutions, and individual users can customize them and run them on their own infrastructure. Local use does not depend on any cloud service.

This move shows that language models of a kind that until recently ran only in large data centers can now be used on smaller devices. gpt-oss can be downloaded with its model weights as well as its source code, which matters particularly for sectors that must meet strict data-privacy requirements or operate on private networks. Users control how the model runs on their systems, and the models have been optimized to run directly on desktops and laptops.

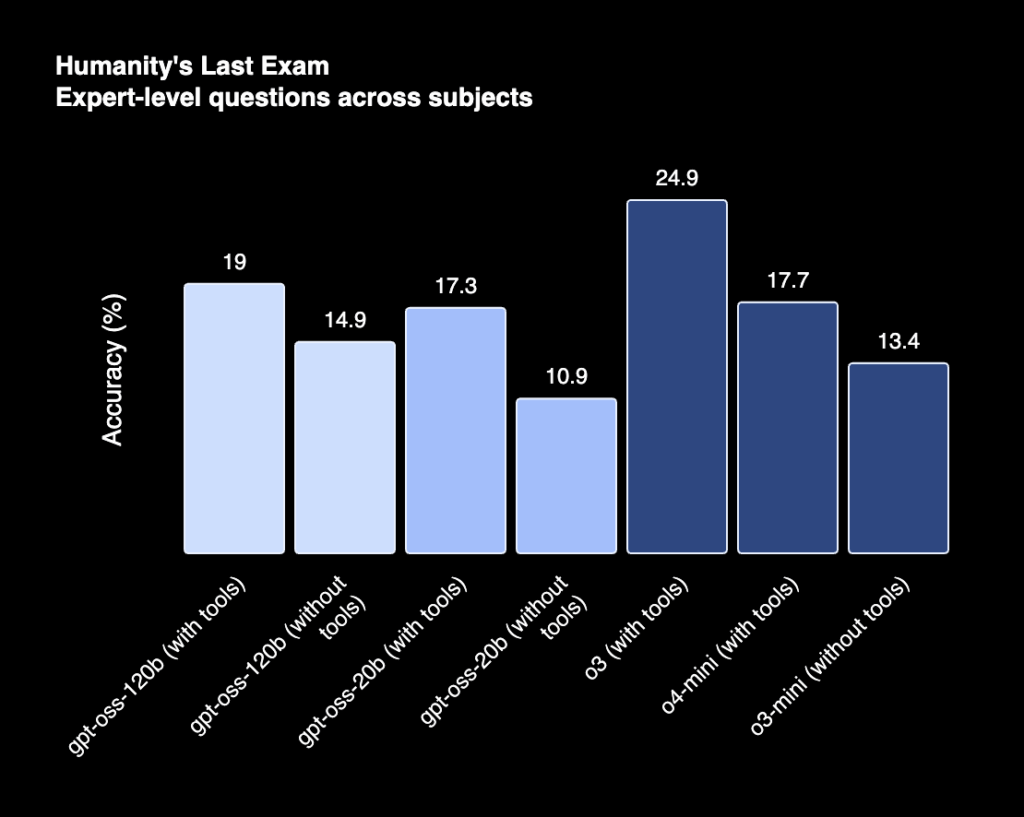

gpt-oss-20b stands out for its low system requirements. Needing only 16 GB of memory, it can run on many modern laptops. Its performance comes close to OpenAI's previously released o3-mini: common benchmarks show similar accuracy in areas such as coding and mathematics, and on specialized benchmarks such as HealthBench and AIME, gpt-oss-20b outperforms o3-mini in some areas.

gpt-oss-120b delivers strong results on a single GPU

Built for larger-scale use, gpt-oss-120b has 117 billion parameters in total but activates only 5.1 billion of them for each token. This keeps its hardware requirements well below what a large model would otherwise need: a single GPU with 80 GB of memory is enough to run it at full capacity. In various evaluations, gpt-oss-120b achieves results similar to o4-mini, one of OpenAI's proprietary models.
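The arithmetic behind these figures can be sketched in a few lines of Python. The parameter counts come from the paragraph above; the bits-per-parameter values are assumptions chosen for illustration (the article does not say how the weights are stored), and the estimate covers the weights only, not activations or the context cache.

```python
# Back-of-the-envelope arithmetic for a mixture-of-experts model.
# Parameter counts are from the article; bits_per_param values are assumptions.


def active_fraction(total_params: float, active_params: float) -> float:
    """Share of the parameters that are used for each token."""
    return active_params / total_params


def weight_memory_gb(total_params: float, bits_per_param: float) -> float:
    """Approximate storage for the weights alone, in gigabytes (1e9 bytes).

    Ignores activations, the KV cache and runtime overhead.
    """
    return total_params * bits_per_param / 8 / 1e9


TOTAL = 117e9   # total parameters of gpt-oss-120b (from the article)
ACTIVE = 5.1e9  # parameters active per token (from the article)

print(f"active share: {active_fraction(TOTAL, ACTIVE):.1%}")
for bits in (16, 8, 4.25):  # assumed storage precisions, not official figures
    print(f"{bits:>5} bits/param -> {weight_memory_gb(TOTAL, bits):6.1f} GB")
```

Under these assumptions only about 4.4% of the parameters do work for each token, and the weights would need roughly 234 GB at 16 bits but only about 62 GB at 4.25 bits per parameter. That suggests why low-precision storage is what makes a single 80 GB GPU feasible.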

Both models use a transformer-based architecture in which only a small fraction of the parameters is active for any given input. This design is known as Mixture-of-Experts (MoE). Both models support a context length of up to 128 thousand tokens, and efficiency is improved with techniques such as Rotary Positional Embedding (RoPE) and grouped multi-query attention.
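To make the Mixture-of-Experts idea concrete, here is a minimal, generic top-k routing sketch in plain Python. It is not OpenAI's implementation: the router weights, the toy "experts", the number of experts, and k=2 are all hypothetical. It only shows the core mechanism, in which a router scores every expert but only the k best-scoring experts are actually executed.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: Vector,
    router: Sequence[Vector],                       # one weight row per expert
    experts: Sequence[Callable[[Vector], Vector]],
    k: int = 2,
) -> Vector:
    """Generic top-k MoE layer (illustrative, not the gpt-oss code)."""
    # 1. Router score for each expert: dot product of its row with the input.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    # 2. Keep only the k highest-scoring experts; the others are never run.
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # 3. Normalise the selected scores into mixing weights.
    gates = softmax([scores[i] for i in top])
    # 4. Weighted sum of the selected experts' outputs.
    out = [0.0] * len(x)
    for g, i in zip(gates, top):
        y_i = experts[i](x)
        out = [o + g * yi for o, yi in zip(out, y_i)]
    return out


# Toy example: four hypothetical experts that just scale their input.
called: List[int] = []


def make_expert(idx: int, scale: float) -> Callable[[Vector], Vector]:
    def expert(v: Vector) -> Vector:
        called.append(idx)  # record which experts actually ran
        return [scale * t for t in v]
    return expert


experts = [make_expert(i, float(i + 1)) for i in range(4)]
router = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]
y = moe_forward([1.0, 2.0], router, experts, k=2)
print(called, y)
```

In the toy run, experts 2 and 1 score highest, so only they are called; experts 0 and 3 cost nothing beyond their router score. Scaled up, this is how a model can hold many parameters while computing with only a few of them per token.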

OpenAI states that the models were trained on a mostly English, text-only dataset with an emphasis on scientific content, software code, and general knowledge. Tokenization uses a tokenizer called o200k_harmony, an extended version of the tokenizer used in GPT-4o models.

Both models offer adjustable levels of reasoning during inference. Developers can set the “reasoning effort”, that is, how much thinking time the model spends before answering, to one of three levels (low, medium, high). This is a major advantage in latency-sensitive applications: complex analyses can get longer, more detailed answers, while simple tasks run faster.
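A sketch of how an application might choose the effort level per request. It assumes, for illustration, that the level is requested with a "Reasoning: <level>" line in the system message of a chat-style request; the `pick_effort` policy and the message layout are hypothetical, so check the documentation of whichever runtime you use.

```python
from typing import Dict, List

EFFORT_LEVELS = ("low", "medium", "high")


def build_messages(prompt: str, effort: str = "medium") -> List[Dict[str, str]]:
    """Chat messages that request a given reasoning effort.

    Assumption: the effort is set via a "Reasoning: <level>" system line.
    """
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"effort must be one of {EFFORT_LEVELS}, got {effort!r}")
    return [
        {"role": "system", "content": f"Reasoning: {effort}"},
        {"role": "user", "content": prompt},
    ]


def pick_effort(latency_sensitive: bool, complex_task: bool) -> str:
    """Hypothetical policy trading answer depth against response time."""
    if latency_sensitive and not complex_task:
        return "low"
    if complex_task and not latency_sensitive:
        return "high"
    return "medium"


msgs = build_messages(
    "Prove that sqrt(2) is irrational.",
    pick_effort(latency_sensitive=False, complex_task=True),
)
print(msgs[0]["content"])  # Reasoning: high
```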

The gpt-oss models were evaluated on comparative benchmarks such as TauBench, HealthBench, and GPQA. In some scenarios, gpt-oss-120b achieved higher accuracy than the proprietary GPT-4o model, most clearly on health questions and competition-level mathematics.

OpenAI also says it paid special attention to model safety. Besides filtering harmful content out of the training data, the models underwent dedicated testing against misuse scenarios, and the company brought external experts into this evaluation process. The aim is to raise the safety level of open models.

For developers who want to run the models on their own computers, OpenAI also provides sample code and user guides. The output format, called harmony, has been published openly as well, with tooling for Python and Rust. The models are optimized for Apple's Metal platform, and they can also be deployed through providers such as Hugging Face, Azure, AWS, and Vercel.
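For intuition about what a prompt format like harmony does, the following simplified renderer turns a list of messages into a single string with special delimiter tokens. The token spellings (`<|start|>`, `<|channel|>`, `<|message|>`, `<|end|>`) reflect our reading of the published format but should be treated as an assumption, and the full format covers more (developer instructions, tool calls, and so on). For real use, prefer OpenAI's official Python or Rust tooling.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Message:
    role: str                      # "system", "user" or "assistant"
    content: str
    channel: Optional[str] = None  # e.g. "analysis" or "final" for assistant turns


def render(messages: List[Message], add_generation_prompt: bool = True) -> str:
    """Flatten a conversation into harmony-style text (simplified sketch)."""
    parts = []
    for m in messages:
        header = f"<|start|>{m.role}"
        if m.channel is not None:
            header += f"<|channel|>{m.channel}"
        parts.append(f"{header}<|message|>{m.content}<|end|>")
    if add_generation_prompt:
        parts.append("<|start|>assistant")  # the model continues from here
    return "".join(parts)


text = render([
    Message("system", "Reasoning: low"),
    Message("user", "What is 2 + 2?"),
])
print(text)
```

The design point is that roles and channels become explicit markers in the token stream, so a runtime can separate the model's reasoning from its final answer when parsing the output.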

With Microsoft's support, GPU-optimized versions of the gpt-oss models are available to Windows users. These versions run on ONNX Runtime and are accessible through Foundry Local and the AI Toolkit for Visual Studio Code, so Windows developers can work with the models directly on their local systems.

Developers can run the models on their own hardware or use them on various cloud platforms. The gpt-oss models thus offer a flexible infrastructure that can be adapted to different requirements, and they are positioned as a solution for local privacy needs and independent development.
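As a rough planning aid, the hardware figures quoted earlier can be turned into a tiny helper that suggests which variant fits a given amount of memory. The thresholds come from the article; the function and its name are hypothetical, and it treats laptop RAM and GPU memory as interchangeable, which a real deployment decision would not.

```python
from typing import Optional

# Minimum memory figures as stated in the article; treat them as rough guides.
REQUIREMENTS_GB = {
    "gpt-oss-120b": 80,  # single GPU with 80 GB of memory
    "gpt-oss-20b": 16,   # e.g. a laptop with 16 GB of memory
}


def pick_model(available_gb: float) -> Optional[str]:
    """Return the largest variant whose stated requirement fits, else None."""
    by_size = sorted(REQUIREMENTS_GB.items(), key=lambda kv: kv[1], reverse=True)
    for name, needed_gb in by_size:
        if available_gb >= needed_gb:
            return name
    return None


for gb in (8, 16, 80):
    print(gb, "GB ->", pick_model(gb))
```

A `None` result is the case where running the models on one of the cloud platforms mentioned above is the more practical option.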

The gpt-oss series published by OpenAI stands out for being free, open-source, and, in its smaller version, able to run on a laptop. This opens the way for AI technology to reach more developers, letting individual users and small teams benefit from these models without a large hardware investment.